Your organization is investing in AI. The business case is real. The pressure to move is real. And somewhere in the back of your mind is this question: what happens if the platform we’re building on gets acquired, deprecated, or outcompeted in two years?

It is a legitimate concern, and in the current AI market, it is not hypothetical. Vendors are pivoting. Models are being deprecated. Pricing structures are shifting. Regulatory requirements are tightening. The organizations that are winning with AI are not the ones who picked the right tool today. They are the ones who built the management discipline to adapt continuously without starting over.

For mid-market companies, the stakes are higher than they are for large enterprises. There is no deep capital reserve to absorb a wrong bet. When an AI infrastructure investment fails to deliver or becomes obsolete ahead of schedule, the cost is felt directly in budget, in time, and in competitive ground lost to organizations that got it right. Getting the strategy right is not a preference. It is a business necessity.

This post introduces a planning strategy called future-proofing your AI infrastructure, a continuous lifecycle management approach that helps CEOs, COOs, and CAIOs protect their AI investments, maximize flexibility, and manage change deliberately rather than reactively.

What Causes AI Solution Obsolescence?

An AI solution, whether deployed today or in the future, can become functionally obsolete for a range of reasons. Unlike more established enterprise technologies, the immature and fast-evolving nature of AI solutions amplifies the risk of early obsolescence significantly.

Consider the current state of the market. There are now hundreds of large language models and AI platforms competing for enterprise adoption, from the offerings of hyperscalers and frontier model providers such as Microsoft, Google, Amazon, and Anthropic, to a rapidly expanding field of specialized vertical platforms and open-source alternatives. Standards are still forming. Pricing models are shifting. Vendors are acquiring, pivoting, and in some cases disappearing. The model that leads the benchmarks today may be superseded within months.

At the regulatory level, the EU AI Act is already in force, and similar legislation is advancing in multiple jurisdictions. AI-specific cybersecurity threats such as prompt injection, data leakage, and model poisoning are evolving faster than most enterprise security frameworks can track.

Figure One summarizes the primary causes of AI solution obsolescence that mid-market organizations should account for in their planning.

| Cause of obsolescence | AI-specific examples |
| --- | --- |
| Outdated technology | New/better AI models; rapid standards evolution; model fragmentation across vendors |
| Evolving use cases | New AI application scenarios; changing business requirements and user needs |
| Supplier decision | Vendor pivots, acqui-hires, or shutdowns; planned model deprecations |
| Regulatory and legal | EU AI Act, data privacy laws, evolving compliance requirements |
| Security and safety | Prompt injection, data leakage, evolving AI-specific cybersecurity threats |
| Low market acceptance | Failed adoption; poor change management; competing de facto standards |
| Poor economics | Runaway compute/token costs; high TCO; low or unmeasurable ROI |
| Lack of support and expertise | AI talent scarcity; limited vendor support; specialized skills required |

The core challenge is not just that these risks exist. It is that AI obsolescence moves faster and less predictably than most enterprise technology transitions. A structured response is not optional. It is the price of a sustainable AI investment strategy.

What Does Future-Proofing Mean in the AI Era?

Future-proofing AI infrastructure is not about predicting which model or platform will win. It is not about waiting for the market to consolidate before investing. That moment will not arrive for years, and organizations that wait will fall further behind. It is also not about overbuying technology now to avoid buying again later.

Future-proofing is a solution lifecycle management strategy. It is a continuous process to maximize flexibility and optionality, while making deliberate investment choices and managing risk at the portfolio level rather than the initiative level.

In the AI era, this definition carries one critical addition that did not exist in earlier technology cycles: your proprietary data is your most durable, model-agnostic asset. Models will change. Vendors will consolidate. Platforms will evolve. But a well-governed, high-quality data foundation covering your knowledge graphs, RAG pipelines, training datasets, and data lineage outlasts any specific technology decision you make today. Organizations that invest in data infrastructure as the foundation of their AI strategy are building something no vendor transition can take away.

What Does a Future-Proof AI Infrastructure Look Like?

Before applying a future-proofing framework, it helps to define what a future-proof AI infrastructure actually needs to deliver. At a high level, five characteristics define the target:

- Usable: the infrastructure and solutions deliver measurable business outcomes today, with no material loss in performance, security, or service levels over the desired planning horizon. Technical capability without business utility is not future-proof; it is shelf-ware.

- Scalable: the infrastructure supports growth in use cases, users, data volumes, and AI complexity over time. What works for a five-person team today should not require a complete rebuild when it needs to serve a larger organization.

- Supportable: technical, performance, and reliability issues can be resolved. This means vendor stability, documented SLAs, model versioning commitments, and clear escalation paths at every layer of the stack.

- Changeable: the infrastructure avoids lock-in and facilitates migration to updated solutions on the organization’s schedule, not the vendor’s. This is the characteristic most often sacrificed in the rush to deploy, and the one most painful to recover when it is missing.

- Economical: the total cost of ownership stays within forecasted ranges. In AI infrastructure, this means accounting not just for initial deployment costs, but for ongoing compute and token costs, licensing fees, retraining costs, and transition expenses as the market evolves.

These five characteristics form the evaluative criteria for every layer of the AI infrastructure stack. They apply equally to a data pipeline and a governance framework.
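Of the five characteristics, Economical is the easiest to reduce to arithmetic. The sketch below is one hedged way to total the cost categories named above over a planning horizon. The class name, field names, the growth assumption, and the forecast check are all illustrative assumptions, not part of any standard model, and the figures you feed in would come from your own budgets and vendor quotes.

```python
from dataclasses import dataclass


@dataclass
class CostAssumptions:
    """Hypothetical inputs; every figure is a placeholder you supply."""
    initial_deployment: float     # one-time build and integration cost
    annual_compute_tokens: float  # year-one compute and token spend
    annual_licensing: float       # recurring licensing fees
    annual_retraining: float      # retraining and re-evaluation cost per year
    transition_reserve: float     # one-time budget for a model or vendor switch
    annual_cost_growth: float     # assumed yearly growth in compute/token spend


def total_cost_of_ownership(c: CostAssumptions, years: int = 5) -> float:
    """Sum one-time and recurring costs over the planning horizon."""
    total = c.initial_deployment + c.transition_reserve
    for year in range(years):
        # Compute and token spend is assumed to compound year over year.
        compute = c.annual_compute_tokens * (1 + c.annual_cost_growth) ** year
        total += compute + c.annual_licensing + c.annual_retraining
    return total


def within_forecast(tco: float, low: float, high: float) -> bool:
    """Check whether the projected TCO stays inside the forecasted range."""
    return low <= tco <= high
```

Running the same calculation with a pessimistic growth rate alongside the expected one is a quick way to see how sensitive the investment is to runaway compute and token costs.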

A Framework for Future-Proofing Your AI Infrastructure

Change in the AI landscape is constant and cannot be avoided. The driving principle behind future-proofing is managing change, not preventing it. Every AI solution has a useful functional life. What is valuable today may be obsolete and discarded in three years. A well-designed future-proofing plan gives the organization options and flexibility rather than lock-in and risk. It prevents suboptimal decision-making by managing the AI infrastructure as a portfolio, not as a collection of independent point decisions.

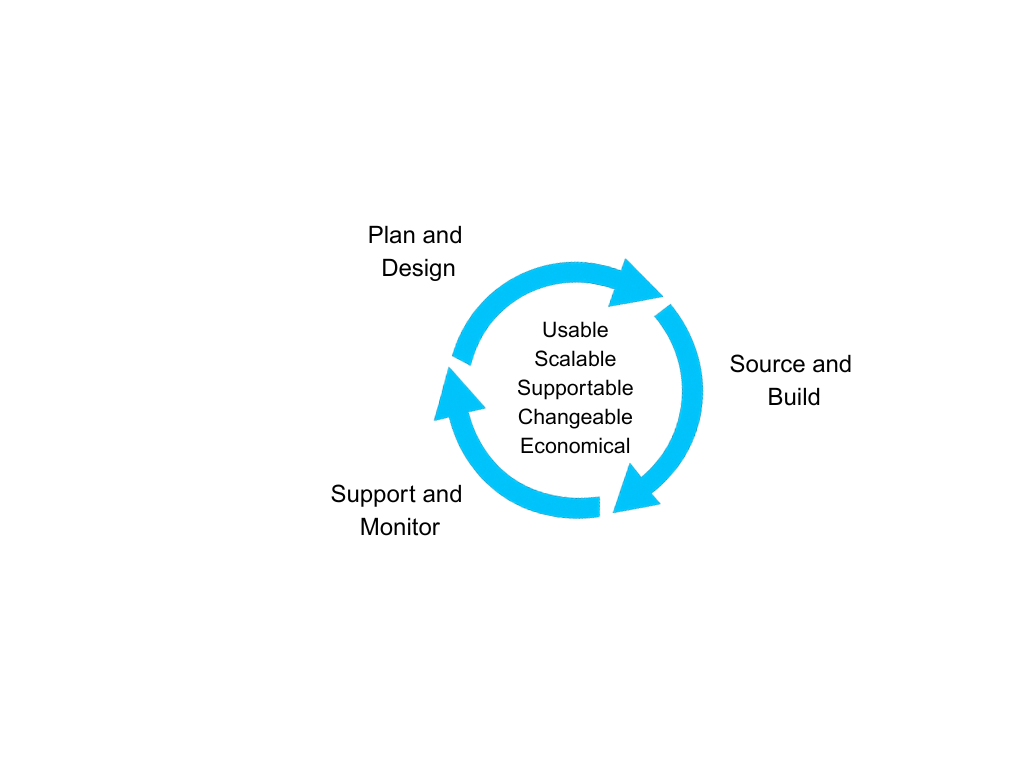

Future-proofing your AI infrastructure is a three-step process, shown in Figure Two. It is not a one-time exercise. It must be revisited annually to remain relevant as the market, regulatory environment, and your own business needs evolve.

Step 1: Plan and Design

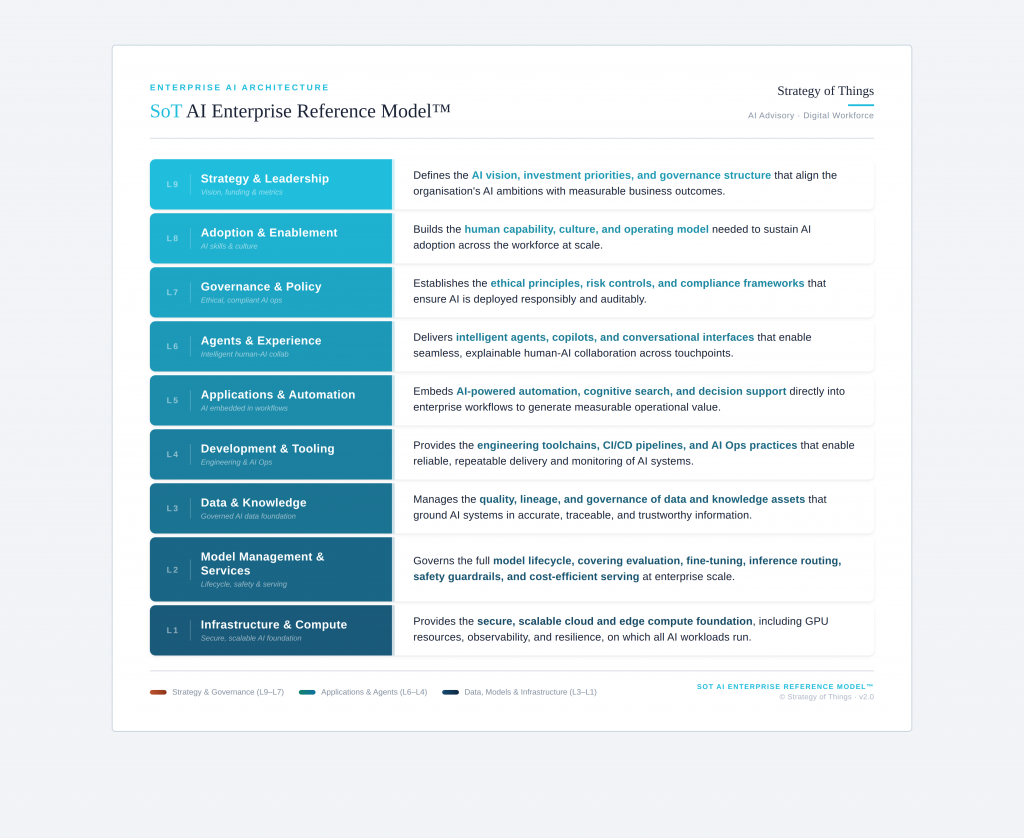

The first step is to identify and place the various components of your AI infrastructure into one of nine actionable categories (layers) using the SoT AI System Framework (Figure Three). These nine categories are then grouped into three broad bands for planning purposes – Data, Models & Infrastructure (L1–L3); Tools, Applications & Agents (L4–L6); and Strategy & Governance (L7–L9).

Once the mapping is complete, the components within each band are assessed against three replacement scenarios:

- Do Not Future-proof: infrastructure elements that you want to, or must, replace within the next year.

- Transition When Convenient: infrastructure elements that you are planning to replace within the next three to five years.

- Future-proof: infrastructure elements that you want to keep using long-term and need to protect and actively manage.

Each component is then assigned to one of the three scenarios. Which components map to which scenario depends on your company’s unique needs, capabilities, and business strategy. Each band may have components that span all three scenarios. An illustrative example is shown in Figure Four.

| SoT AI Framework Band | Short term (1 year): Do Not Future-proof | Medium term (3 to 5 years): Transition When Convenient | Long term (5+ years): Future-proof |
| --- | --- | --- | --- |
| Data, Models & Infrastructure (L1–L3) | Ad hoc data experiments; single-use model API calls | Current LLM API integrations; early AI Ops tooling | Proprietary data pipelines; cloud/edge compute infrastructure; data quality & governance frameworks |
| Applications & Agents (L4–L6) | One-off summarization tools; short-term chatbot pilots; experimental agent prototypes | Single-vendor workflow automation; department-level AI applications | Customer-facing AI agents embedded in core processes; enterprise cognitive search |
| Strategy & Governance (L7–L9) | Ad hoc AI policies; informal steering groups | Adoption & enablement programs. Keep flexible as capabilities evolve. | AI governance & compliance frameworks (L7); AI investment strategy & portfolio structure (L9) |

The classification of each AI initiative or infrastructure component is determined in conjunction with IT, operations, and the relevant business units. Key considerations include:

- Model portability and data ownership: can you move your data and workloads if the vendor changes?

- Business criticality: what is the operational and revenue impact if this capability becomes unavailable?

- Regulatory exposure: does this layer carry compliance obligations that require long-term governance investment?

- Switching costs: what are the contractual, technical, and organizational costs of transitioning to an alternative?

- AI vendor lock-in risk: how deeply does this initiative embed you in a single vendor’s proprietary stack?
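To make the cross-functional discussion concrete, some teams may find it helpful to score each component against the considerations above. The sketch below is a hypothetical scoring sheet, not a prescribed method: the 1-to-5 scales, the thresholds of 12 and 7, and all names are assumptions chosen for illustration, and the scores stand in for the judgment calls your review group would make.

```python
from dataclasses import dataclass
from enum import Enum


class Scenario(Enum):
    DO_NOT_FUTURE_PROOF = "Do Not Future-proof"
    TRANSITION_WHEN_CONVENIENT = "Transition When Convenient"
    FUTURE_PROOF = "Future-proof"


@dataclass
class Component:
    name: str
    # Each consideration is scored 1 (low) to 5 (high) by the review group.
    business_criticality: int
    regulatory_exposure: int
    switching_cost: int
    lock_in_risk: int
    data_portability: int  # 5 = data and workloads are easy to move


def classify(c: Component) -> Scenario:
    """Hypothetical rule: high criticality, exposure, and switching cost
    together indicate a component worth protecting long-term."""
    durability_need = (
        c.business_criticality + c.regulatory_exposure + c.switching_cost
    )
    if durability_need >= 12:  # illustrative threshold
        return Scenario.FUTURE_PROOF
    if durability_need >= 7:   # illustrative threshold
        return Scenario.TRANSITION_WHEN_CONVENIENT
    return Scenario.DO_NOT_FUTURE_PROOF


def needs_attention(c: Component) -> bool:
    """Flag components that should be durable but are hard to move."""
    exposed = c.lock_in_risk >= 4 or c.data_portability <= 2
    return classify(c) is Scenario.FUTURE_PROOF and exposed
```

A component that lands in the Future-proof scenario and is also flagged by `needs_attention` is a candidate for the contractual and architectural protections discussed in Step 2.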

A few principles are worth highlighting as you work through the categorization. Your data foundation, including the pipelines, knowledge assets, and governance structures that underpin all AI activity, warrants the most rigorous future-proofing. These investments carry the highest switching costs, the longest useful lives, and the greatest business risk if they become inaccessible. They are also the one part of your AI infrastructure that outlasts any specific model or vendor decision you make today.

Your AI applications and agents sit in the middle. They matter operationally, but the pace of change in this space means over-engineering for permanence carries its own risk. Build on open standards and interoperable APIs, and avoid deep dependency on any single vendor’s proprietary integration layer.

Your governance and strategy layer is split. Your AI governance framework, covering compliance structures, audit trails, and responsible use policies, must be future-proofed now, given the regulatory trajectory. The cost of retrofitting governance onto a deployed AI system is significantly higher than building it in from the start. Your workforce adoption programs and training, by contrast, should stay flexible; locking in rigid structures too early will backfire as AI capabilities evolve. And your AI investment strategy sets the conditions for everything below. Getting this right is the precondition for everything else.

Step 2: Source and Build

Once categorization is complete, the second step is to procure and build the necessary solutions. The distinction between sourcing and buying matters here. Buying is transactional. Sourcing is strategic: it is about ensuring durable access to critical capabilities over the planning horizon.

For AI infrastructure, a future-proof sourcing strategy includes several specific practices:

- Own your data foundation: prioritize cloud-agnostic architecture and data portability. Own your data pipelines outright. Avoid architectural decisions that make your data inaccessible without a specific vendor’s tooling. This is the single most important sourcing decision you will make.

- Protect your model investments: negotiate fine-tuning rights and model output ownership in vendor contracts before deployment begins. Avoid training on proprietary models where you cannot export results independently. Prefer open-weight models or contractual portability provisions where strategically important.

- Build AI applications on open standards: use interoperable APIs and structure vendor contracts with exit provisions and data return obligations. Avoid building mission-critical workflows on undocumented or proprietary integration layers that a vendor could change or discontinue.

- Establish governance before you sign: set your AI governance and compliance requirements before signing vendor agreements, not after deployment. Retrofitting compliance onto a live AI system is far more expensive and disruptive than building it in from the start.
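For technical teams, the practice of building on interoperable interfaces can be sketched in a few lines. The example below is a minimal illustration under stated assumptions: `TextModel`, the two adapter classes, and `summarize_ticket` are hypothetical names, and the adapters return placeholder strings where a real implementation would call a vendor SDK or a self-hosted model.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface the application is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class VendorAModel:
    """Hypothetical adapter; a real one would call vendor A's SDK here."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class OpenWeightModel:
    """Hypothetical adapter for a self-hosted open-weight model."""

    def complete(self, prompt: str) -> str:
        return f"[open-weight] {prompt}"


# Swapping vendors becomes a configuration change, not a rewrite.
REGISTRY: dict[str, TextModel] = {
    "vendor_a": VendorAModel(),
    "open_weight": OpenWeightModel(),
}


def get_model(name: str) -> TextModel:
    """Look up the configured model adapter by name."""
    return REGISTRY[name]


def summarize_ticket(model: TextModel, ticket_text: str) -> str:
    """Business logic depends on the interface, not on a vendor."""
    return model.complete(f"Summarize this support ticket: {ticket_text}")
```

The design choice is that vendor-specific code lives only inside the adapters. If a vendor changes terms or deprecates a model, the workflow code that calls `summarize_ticket` does not need to change.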

Build-vs-buy-vs-partner decisions should trace directly back to the categorization in Step 1. What is in the Future-proof column warrants investment in owned capabilities or robust contractual protections. What is in the Do Not Future-proof column should be sourced for speed and cost, not durability.

Step 3: Support and Monitor

The third step is to keep the AI infrastructure operational, performant, and aligned with business needs over the planning horizon. In AI infrastructure, this is meaningfully different from traditional IT support. The pace of change means that passively maintaining the status quo is itself a form of obsolescence risk.

Effective support and monitoring practices include:

- Monitor your models continuously: track for performance degradation, output drift, and cost efficiency. Establish retraining and re-evaluation cadences before deployment, not as an afterthought when results start declining.

- Audit your data regularly: data quality, lineage, and knowledge freshness require ongoing attention. Data debt compounds faster than technical debt in AI systems. Stale or degraded inputs will erode model performance silently, often long before anyone notices.

- Track vendor roadmaps actively: deprecation notices, pricing changes, and capability shifts from AI vendors can arrive with limited warning. Maintain deployment practices that are model-agnostic so that a vendor change does not require rebuilding from scratch.

- Review agent performance as capabilities evolve: AI agents that operate within acceptable parameters today may require recalibration as underlying models change. Human oversight mechanisms should be reviewed regularly, not set once at launch.

- Keep governance current with regulation: the EU AI Act and equivalent legislation require ongoing compliance reviews, not a one-time certification. Treat your governance framework as a living operational capability, not a document that gets filed and forgotten.
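The monitoring practices above can start very simply. The sketch below assumes you already collect a quality score per evaluation run and know your monthly spend; the function names and the 0.05 tolerance are illustrative assumptions, not recommended values, and a production setup would likely use a dedicated monitoring tool.

```python
from statistics import mean


def degradation_alert(
    baseline_scores: list[float],
    recent_scores: list[float],
    max_drop: float = 0.05,  # illustrative tolerance, set by your team
) -> bool:
    """Return True when the recent average quality score has fallen
    more than max_drop below the baseline average."""
    return mean(baseline_scores) - mean(recent_scores) > max_drop


def cost_per_task(total_spend: float, tasks_completed: int) -> float:
    """Average spend per completed task, a basic cost-efficiency measure."""
    if tasks_completed <= 0:
        raise ValueError("tasks_completed must be positive")
    return total_spend / tasks_completed


def review_flags(
    baseline_scores: list[float],
    recent_scores: list[float],
    total_spend: float,
    tasks_completed: int,
    max_cost_per_task: float,
) -> list[str]:
    """Collect the issues worth raising at the next review."""
    flags = []
    if degradation_alert(baseline_scores, recent_scores):
        flags.append("quality degradation")
    if cost_per_task(total_spend, tasks_completed) > max_cost_per_task:
        flags.append("cost per task above budget")
    return flags
```

Agreeing on the baseline, the tolerance, and the cost ceiling before deployment is what turns this from an afterthought into the re-evaluation cadence described above.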

The most important investment in this step is internal AI competency. Organizations that outsource all institutional knowledge about their AI infrastructure to vendors are systematically exposed to transition risk. Building internal expertise, even at a basic level, at each layer of the stack reduces dependence and improves the organization’s ability to make informed decisions as the market evolves.

The Organizations That Win Won’t Be the Ones Who Picked the Right Model Today

They will be the ones who treated AI infrastructure as a managed portfolio, directed, governed, and actively lifecycle-managed, rather than a series of one-time purchases. Future-proofing is not about achieving permanence. It is about building the capacity to adapt continuously, at manageable cost, on your own terms.

The following diagnostic and action steps are a practical starting point. Use them before your next AI infrastructure investment decision, and plan to revisit them every year.

Is Your AI Infrastructure Future-Proof? A Self-Assessment

Answer these questions honestly. If most of them are difficult to answer, or if the answers differ depending on who in your organization you ask, your AI infrastructure may be more exposed than you realize.

- If your primary AI model or platform vendor announced end-of-life or a major pricing restructure tomorrow, do you have a transition plan? Or would your team be starting from scratch?

- Do you own your data pipelines, training assets, and model outputs outright? Or does your vendor effectively control access to them?

- Do your AI vendor contracts include data portability provisions, model output ownership clauses, and exit terms? Or did those conversations never happen before you signed?

- When you evaluate a new AI investment, do you assess the full five-year total cost of ownership, including compute, retraining, transition, and talent costs? Or just the initial deployment budget?

- Is your AI governance framework documented, current, and keeping pace with regulatory developments? Or is compliance being handled reactively as issues arise?

- Can you identify right now which AI investments in your portfolio are critical enough to future-proof versus which ones you can afford to replace? Or is everything being treated the same?

- When did you last conduct a structured, cross-functional review of your AI infrastructure portfolio as a whole, rather than of individual initiatives?

What You Can Do Now

Building a future-proof AI infrastructure does not require a complete overhaul before you can start. These steps allow you to assess your current state and take the first practical actions, regardless of where you are in your AI journey.

- Map your current AI portfolio against the three framework bands: data and infrastructure, applications and agents, and strategy and governance. Identify which investments exist in each band, who owns them, and how they are currently being funded. If this exercise is harder than it should be, that itself is a signal.

- Audit your vendor contracts for data portability, model output ownership, and exit provisions. For any critical AI investment that lacks these protections, prioritize addressing the gap before renewing or expanding the engagement.

- Evaluate your top three AI investments against the five characteristics: Usable, Scalable, Supportable, Changeable, and Economical. Be honest about where each one falls short. The gaps you identify are your near-term future-proofing priorities.

- Assess your AI governance framework against current and anticipated regulatory requirements. If it was built more than twelve months ago and has not been reviewed since, treat it as out of date until proven otherwise.

- Schedule an annual future-proofing review with IT, operations, and your key business unit leads. Put it on the calendar now. The organizations that build this discipline early, before a vendor change, a regulatory development, or a failed transition forces it, are the ones that compound their AI advantage over time.

If the diagnostic questions or action steps surfaced gaps you recognize, we would welcome a conversation about what a future-proofing strategy would look like in your organization. Whether you are making your first significant AI infrastructure investment or rationalizing a portfolio of existing ones, the starting point is the same: the right structure, designed for where you are today.

Reach out to us at Strategy of Things. We would be glad to help.

This article is part of a continuing series aimed at providing senior leaders and managers with a practical working knowledge of artificial intelligence and how to manage it as a business capability. This article was originally published on the Strategy of Things AI (SoT.ai) website.
