The SoT AI Enterprise Reference Model™ in practice: how a nine-layer enterprise AI framework drives every recommendation we make.

QUICK RECAP FROM PART 1

Most AI programs fail not because of the technology but because of missing structure. AI is a business capability, not a technical one. Technical and organizational conditions are inseparable: you cannot have enterprise-wide AI value without both. The SoT AI Enterprise Reference Model™ organizes the full capability stack into nine layers across three zones, inspired by the OSI model’s principle of layered abstraction. Each layer is a distinct cluster of capabilities with its own skills, tools, and approaches. All nine must be present for AI to deliver sustained enterprise value.

A diagnostic without a direction is just a score. Here is how the model becomes action.

Part 1 introduced the SoT AI Enterprise Reference Model™, explained the logic behind its nine layers, and established why technical and organizational capabilities must be treated as a single, inseparable system. If you have not read Part 1, that is the right place to start.

This post picks up where Part 1 ended. The model is a diagnostic structure. It tells you where you are. What it does not yet answer is: what do you do with that picture? How does knowing your Layer 3 maturity change a vendor decision? How does a weak Layer 8 score affect which use cases you should prioritize? How do the nine layers connect to the operating structure that turns assessment into enterprise-wide impact?

Those are the questions Part 2 answers.

The model tells you where you are. The AI Operating Function is how you move.

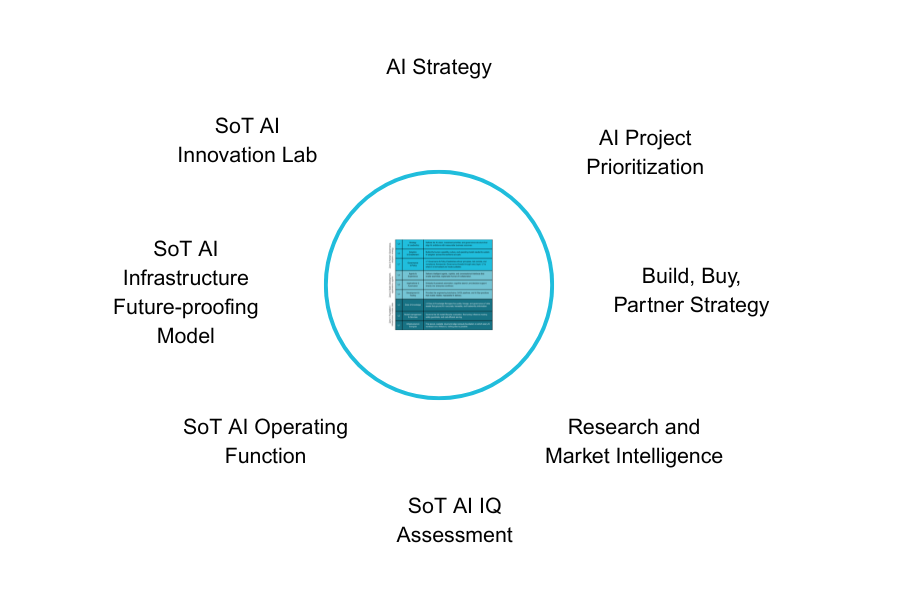

Knowing where you are is the beginning, not the end. The SoT AI Enterprise Reference Model™ is a diagnostic structure. What sits on top of it is the AI Operating Function, the four-pillar operating model that connects the nine layers to business outcomes.

The four pillars are Direction, Prioritization, Governance, and Execution.

Direction means defining and maintaining the AI vision and portfolio investment strategy. It is the answer to: what are we trying to achieve with AI, and how are we allocating resources toward it? Without direction, the other three pillars have no anchor.

Prioritization means evaluating and sequencing AI opportunities with rigor. It is the answer to: of everything we could do with AI, what should we do first and why? This is where the nine-layer model connects directly to use case decisions: a use case is only genuinely ready to execute when the layers it depends on are sufficiently mature.

Governance means establishing the risk, compliance, and accountability structures that make AI trustworthy. It is the answer to: who is accountable for AI decisions, how do we ensure they are made responsibly, and what happens when something goes wrong? Governance is not a separate workstream. It is the operating condition that makes the other three pillars sustainable.

Execution means building, deploying, and scaling AI capabilities with reliability. It is the answer to: can we actually deliver what the strategy calls for, at the quality and speed the business needs? Execution is where the Foundation zone of the nine-layer model becomes most visible. You cannot execute reliably on weak infrastructure, ungoverned data, or immature development practices.

Most organizations that struggle with AI have gaps in two or more of these pillars. Direction without Governance creates reckless speed: capable teams building things the organization is not ready to be accountable for. Execution without Prioritization creates waste: high activity, diffuse impact, and a portfolio of pilots that never scale. Governance without Direction creates paralysis: rigorous risk management applied to an unclear strategy, which means rigorous management of the wrong things. Prioritization without Execution creates a compelling roadmap that sits on a slide because the delivery capability to realize it does not exist.

The AI Operating Function only works as a complete system, which is exactly why the nine-layer model underneath it must be complete too. A gap in the model creates a gap in the Operating Function. A gap in the Operating Function means value does not get delivered.

A dedicated post on the AI Operating Function is coming soon in this series.

Every recommendation we make, from what to build to what to fix first, is grounded in this model.

The SoT AI Enterprise Reference Model™ is not a slide we show at the beginning of an engagement. It is the operating system we run on. Here is what that looks like in practice.

Build, buy, or partner: a capability question, not a preference.

When a client asks us whether to build a capability, buy a tool, or partner with a vendor, most advisors answer based on market knowledge or vendor relationships. We answer by looking at the client’s layer maturity.

The build-buy-partner decision involves three layers primarily. Layer 2 maturity tells us whether the client has the model management capability to operate and maintain a custom-built AI system responsibly. Layer 4 maturity tells us whether they have the engineering toolchains, CI/CD discipline, and AI Ops practices to build and deliver reliably. Layer 6 maturity tells us whether they have the agent architecture and experience design capability to create AI interactions that actually work for users.

A client with low Layer 4 maturity has no business building custom AI systems from scratch, regardless of how compelling the business case looks. The capability to deliver does not exist, and building on an absent foundation creates fragility, not capability. That client should buy a proven solution and use the engagement to build Layer 4 maturity for the future.

A client with strong Layer 2 and Layer 4 maturity and a well-governed Layer 3 data foundation may be ready to build precisely the bespoke capability their competitive position requires. The model tells us which situation we are in. The recommendation follows from the evidence, not from the advisor’s preferences.
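The decision logic in this section can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the assessment tooling itself: the `LayerScores` type, the `recommend` function, the 3.0 readiness threshold, the inclusion of Layer 6 in the build test, and the rule that mixed readiness points toward partnering are all invented for the example. The article does not publish its scoring scale or cut-offs.

```python
from dataclasses import dataclass

# Hypothetical cut-off: the article does not state its scale or thresholds.
BUILD_READY = 3.0


@dataclass(frozen=True)
class LayerScores:
    layer2: float  # model management
    layer3: float  # data foundation
    layer4: float  # engineering toolchains, CI/CD, AI Ops
    layer6: float  # agent architecture and experience design


def recommend(scores: LayerScores, threshold: float = BUILD_READY) -> tuple[str, list[str]]:
    """Return a recommendation plus the layer scores that justify it."""
    named = {
        "Layer 2": scores.layer2,
        "Layer 3": scores.layer3,
        "Layer 4": scores.layer4,
        "Layer 6": scores.layer6,
    }
    gaps = [f"{name} = {value}" for name, value in named.items() if value < threshold]

    # From the text: low Layer 4 maturity rules out building from scratch.
    if scores.layer4 < threshold:
        return "buy", gaps
    # From the text: strong Layers 2 and 4 plus a governed Layer 3 can support a build.
    # Requiring Layer 6 as well is an assumption of this sketch.
    if not gaps:
        return "build", [f"{name} = {value}" for name, value in named.items()]
    # Assumption: delivery capability exists but other layers lag, so partner.
    return "partner", gaps


decision, evidence = recommend(LayerScores(layer2=3.5, layer3=3.2, layer4=1.8, layer6=2.9))
print(decision, evidence)
```

Returning the evidence alongside the decision mirrors the point of the section: the recommendation should be explainable from the scores, not from the advisor's preferences.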

Use case prioritization: what you are ready for, not what looks exciting.

The most common failure pattern in AI portfolio management is prioritizing use cases based on business value alone. A use case can have compelling ROI, enthusiastic sponsorship, and strong strategic alignment, and still be the wrong thing to build next, because the layers it depends on are not sufficiently mature to support it.

A use case that requires high data quality, rich lineage, and a governed knowledge base to function reliably, such as an AI-powered clinical decision support tool in healthcare, is not viable for a client with a Layer 3 maturity score of 1.5. The model does not just tell you that. It tells you exactly what layer investment is required before that use case becomes executable, creating a natural sequencing logic: build the foundation before building on it.

Conversely, a use case with more modest data requirements and well-understood workflow integration can be a strong first move precisely because the organization is ready for it. Early wins build the organizational confidence and capability for more ambitious use cases later. The nine-layer model is the lens that makes that sequencing principled rather than political.

Research and market intelligence: organized by layer, not by news cycle.

The AI market moves faster than almost any other technology domain. New models, new frameworks, new vendors, new regulatory developments, and new enterprise deployment patterns emerge continuously. Without a structuring principle, staying current is an overwhelming task that produces noise rather than intelligence.

The nine layers are our organizing taxonomy. An advance in model evaluation methodology is a Layer 2 development. A new agentic framework is a Layer 6 development. A regulatory change in AI governance is a Layer 7 development. A new approach to enterprise RAG pipelines is a Layer 3 development. Every piece of market intelligence gets tagged to its layer, which means it automatically connects to the clients and engagements where it is most relevant.

This is how we ensure our research is always actionable rather than merely current.
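As a rough illustration of how layer tags connect intelligence to engagements, the sketch below routes tagged items to the engagements working on the matching layer. The four items and their layer tags come from the examples above; the engagement names, their focus layers, and the `route` function are hypothetical.

```python
from collections import defaultdict

# Layer tags for the four examples given in the text.
intel = [
    ("advance in model evaluation methodology", 2),
    ("new agentic framework", 6),
    ("regulatory change in AI governance", 7),
    ("new approach to enterprise RAG pipelines", 3),
]

# Hypothetical engagements and the layers each is currently working on.
engagements = {
    "client-a": {3, 7},
    "client-b": {2, 6},
}


def route(items, focus_by_engagement):
    """Return engagement -> intel items whose layer tag matches its focus layers."""
    routed = defaultdict(list)
    for title, layer in items:
        for engagement, layers in focus_by_engagement.items():
            if layer in layers:
                routed[engagement].append(title)
    return dict(routed)


briefings = route(intel, engagements)
for engagement, titles in briefings.items():
    print(engagement, titles)
```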

AI strategy: every section grounded in data, not in opinion.

When we build an AI strategy for a client, every section of the strategy document has a defined source in the nine-layer model. Nothing is written from blank paper.

Vision and goals come from Layer 9 assessment data, specifically the client’s current maturity across vision, investment, and governance sub-areas and the gaps that most constrain strategic ambition. Governance principles come from Layer 7 maturity scores and the specific compliance and risk sub-areas where the client is most exposed. Build-buy-partner recommendations come from the intersection of Layer 2, Layer 4, and Layer 6 maturity scores. The roadmap integrates all nine layers, sequencing capability investment against use case readiness in a way that is grounded in evidence rather than consultant judgement.

The result is a strategy document where every recommendation is traceable back to a specific data point in the assessment. A client can ask why we recommended buying rather than building for a specific capability, and we can point to the Layer 4 maturity score that made building premature. That traceability is what separates a strategy that earns confidence from one that earns a polite nod.
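The traceability described here amounts to a lookup from each strategy section to the layers that feed it. The sketch below assumes a simple mapping taken from the paragraph above; the section keys, the `evidence_for` function, and the assessment scores are hypothetical and shown only to make the idea concrete.

```python
# Strategy section -> layers whose assessment data feeds it, as described in the text.
SECTION_SOURCES = {
    "vision_and_goals": [9],
    "governance_principles": [7],
    "build_buy_partner": [2, 4, 6],
    "roadmap": list(range(1, 10)),
}


def evidence_for(section: str, assessment: dict[int, float]) -> dict[int, float]:
    """Return the layer scores that back a given strategy section."""
    missing = [layer for layer in SECTION_SOURCES[section] if layer not in assessment]
    if missing:
        # A section with no underlying data cannot be traced, so fail loudly.
        raise ValueError(f"no assessment data for layers {missing}")
    return {layer: assessment[layer] for layer in SECTION_SOURCES[section]}


# Hypothetical assessment scores, for illustration only.
assessment = {1: 3.0, 2: 3.4, 3: 2.1, 4: 1.8, 5: 2.5, 6: 2.9, 7: 2.2, 8: 1.9, 9: 2.7}
print(evidence_for("build_buy_partner", assessment))
```

Raising an error when a layer has no score reflects the claim that nothing is written from blank paper: a section without assessment data behind it should not be drafted at all.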

The most useful thing this model does is show you what is missing. Here are the questions worth asking.

The single most useful thing the nine-layer model does for an organization is reveal what is missing. Not in theory, but in your specific context, with your current capabilities, investments, and gaps.

If you lead operations or run a business unit:

- How many of your AI pilots have made it into a production workflow your team depends on daily? If the number is low, the constraint is almost never the technology. It is Layer 8: the adoption, change management, and operating model conditions that determine whether people actually change how they work.

- When an AI system in your operations produces a wrong or unexpected result, how quickly can you explain what happened, and to whom? If the answer is that you cannot, you have a Layer 7 gap, and it will matter the moment a regulator, auditor, or customer asks the question.

- Can you name three specific operational outcomes, such as cost, throughput, error rate, or cycle time, that your AI investments are expected to move in the next 12 months? If not, you may have a Layer 9 gap: AI activity without an outcome mandate.

If you lead AI, technology, or digital transformation:

- Layer 3 first: how confident are you in the quality, lineage, and governance of the data your AI systems are trained and grounded on? More AI initiatives fail here than anywhere else, and most organizations do not find out until a model produces a result that cannot be explained or trusted.

- Layer 7 before Layer 5: do you have the governance and compliance frameworks in place before you embed AI into business workflows? Automating a process with AI before governance is in place does not accelerate value. It accelerates risk.

- Layer 9 as a working foundation, not a destination: you do not need a perfect AI strategy. You need one that is clear enough to make the next three prioritization decisions without politics, defensible enough to take to the board, and stable enough that your teams are not re-litigating the vision every quarter.

- Zone 3 before claiming scale: have you addressed adoption and culture as seriously as you have addressed technology? The most sophisticated AI system in the world does not create value if the people it is built for do not trust it, understand it, or use it.

If any of those questions made you uncomfortable, you are not alone. The gap between where organizations think they are and where they actually are, particularly in the upper layers, is one of the most consistent findings in enterprise AI.

The good news is that a clear picture of where you are is the beginning of a clear path forward. You cannot fix what you cannot see.

Find out where your organization actually stands, then let’s talk about what to do about it.

Together, Part 1 and Part 2 of this series have introduced the SoT AI Enterprise Reference Model™, the logic behind its nine layers, and the way it drives every decision, recommendation, and strategy we produce at Strategy of Things.

The full nine-part series goes layer by layer, with one post per layer unpacking what it encompasses, what maturity looks like at each level, the most common failure patterns, and how it connects to the layers above and below it. The first post in the series covers Layer 1: Infrastructure and Compute.

If you want to know where your organization actually stands across the nine layers, the AI IQ™ Assessment is the fastest way to find out. The Quick Scan covers the four AI Operating Function layers and takes around 20 minutes. The Full Assessment covers all nine layers and 72 sub-areas and forms the basis for a complete AI readiness diagnostic.

For operational and executive leaders who are not sure where their organization’s AI effort is actually working and where it is fragile, the Quick Scan is designed to start that conversation. Not as a technology audit, but as an honest view of where structure is present and where it is absent.

This article is part of a continuing series aimed at providing senior leaders and managers with a practical working knowledge of artificial intelligence and how to manage it as a business capability. This article was originally published on the Strategy of Things AI (SoT.ai) website.
