This is the second in a series of blog posts on the convergence of computer vision and the Internet of Things. Part Two provides an overview of edge processing, or fog computing, which, combined with IoT, will enable some revolutionary advances in computer vision. In subsequent parts, we will explore new frameworks, best practices and design methodologies for the convergence of computer vision and IoT.

Cloud computing is a critical component of the Internet of Things (IoT) architecture. Data collected from sensors and devices is transferred to a platform in the cloud, where it is aggregated, normalized, processed according to predefined rules, and acted upon. However, the cloud model is not ideal for real-time and mission-critical applications. Take the example of a high-volume continuous manufacturing process. Once the sensors detect an anomaly, the system must take corrective action immediately; otherwise the defect will propagate. The time from detection to correction must be measured in seconds. In a self-driving car, the response time must be measured in milliseconds. For these applications, the round trip from device to gateway to the cloud and back takes too long. A different architecture is needed, one in which data collection and processing happen closer to the devices (at the edge).
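To make the millisecond requirement concrete, the short sketch below computes how far a car moves while it waits for a decision. The 100 km/h speed and the 10 ms (local) and 150 ms (cloud round trip) latencies are illustrative assumptions, not measured values.

```python
# Illustrative only: speeds and latencies are assumed values, not measurements.

def distance_travelled_m(speed_kmh: float, latency_ms: float) -> float:
    """Metres a vehicle covers at constant speed while waiting latency_ms."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * latency_ms / 1000


if __name__ == "__main__":
    # Hypothetical latencies for a local decision vs. a cloud round trip.
    for label, latency_ms in [("local processing", 10), ("cloud round trip", 150)]:
        metres = distance_travelled_m(100, latency_ms)
        print(f"{label}: {latency_ms} ms -> {metres:.2f} m travelled at 100 km/h")
```

Under these assumptions, the 150 ms round trip corresponds to roughly 4.2 m of travel before any correction can begin, compared with under 0.3 m for the 10 ms local path.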

Cloud computing models for IoT

IoT powered by PaaS and SaaS

Cloud-based Platform as a Service (PaaS) and Software as a Service (SaaS) technologies are now commonplace, as enterprise applications have steadily migrated out of corporate data centers. In recent years, PaaS and SaaS have also become the major enabling models of the IoT market across a wide range of use cases.

PaaS providers offer ready-to-use platform services such as security, data storage, device management and big data analysis. SaaS providers deliver application-level services such as billing, software management and visualization tools. Google Cloud IoT Core, Microsoft Azure IoT and AWS IoT are examples of PaaS/SaaS platforms.

Advantages of PaaS and SaaS

PaaS/SaaS platforms are important for the success of many small IoT startup companies.

- Product companies can develop and deploy applications quickly. What used to take months can be done in weeks.

- Companies can scale as needed, with less startup cost, while they are validating new products in new markets.

- Companies no longer need to maintain their own data centers. These platforms usually provide better reliability and uptime than an individual product company can achieve, while also reducing operational overhead.

As a result, IoT product companies can focus on perfecting design, enhancing user experience and delivering better products for their specific use cases.

Disadvantages of the Cloud Service Model

In a pure cloud-centric model, all raw data is aggregated and streamed to the cloud for storage and processing. Despite its advantages, this model has some major drawbacks:

- Unpredictable response time from cloud server to endpoints

- Unreliable cloud connections can bring down the service

- Excessive data can overburden infrastructure

- Privacy issues when sensitive customer data are stored in the cloud

- Difficulty scaling to an ever-increasing number of sensors and actuators

These drawbacks make cloud-centric design unsuitable for the more mission-critical industrial, healthcare and military applications.
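A rough calculation illustrates the "excessive data" drawback for vision workloads. The sketch assumes an uncompressed 1080p camera at 30 frames per second with 3 bytes (24-bit RGB) per pixel, and a hypothetical site with 50 such cameras. Real deployments usually compress video, which reduces the load but does not remove it.

```python
# Illustrative only: uncompressed video; real cameras usually compress.

def raw_video_bitrate_gbps(width: int, height: int, bytes_per_pixel: int, fps: float) -> float:
    """Uncompressed video bitrate in gigabits per second."""
    bits_per_frame = width * height * bytes_per_pixel * 8
    return bits_per_frame * fps / 1e9


if __name__ == "__main__":
    per_camera = raw_video_bitrate_gbps(1920, 1080, 3, 30)
    print(f"one camera: {per_camera:.2f} Gbit/s")      # 1.49
    print(f"50 cameras: {50 * per_camera:.1f} Gbit/s")  # 74.6
```

Streaming all of that raw data to a remote data center quickly saturates typical uplinks, which is one reason to process it closer to the cameras.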

Fog Computing for IoT

Industrial Internet of Things

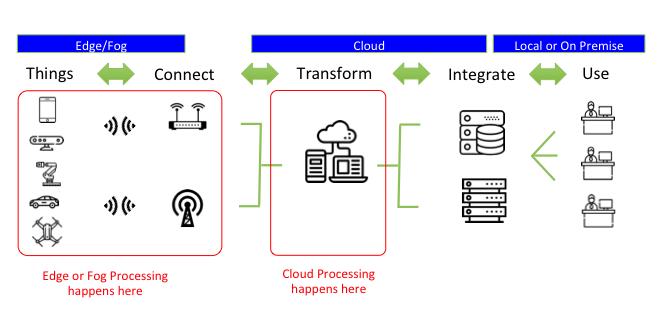

Fog computing, sometimes also known as edge computing, is well suited to Industrial Internet of Things (IIoT) applications. The architecture uses local computing nodes, located between the endpoints (e.g., sensors and cameras) and cloud data centers, to gather, store and process data instead of relying on a remote cloud data center (Figure One).

Connected devices send data to, and receive instructions from, a nearby node, usually installed on premises. The node could be a gateway device, such as a switch or router, with extra processing and storage capabilities. It can receive and process incoming data and react to it in real time.
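The node behavior described above can be sketched as a small class that acts on readings locally and forwards only a compact summary upstream. The `FogNode` name, the fixed-threshold anomaly rule and the summary fields are hypothetical choices for illustration; a real gateway would run vendor-specific software and more robust anomaly detection.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Callable, Dict, List


@dataclass
class FogNode:
    """Hypothetical on-premises node: reacts locally, summarizes for the cloud."""
    threshold: float                     # assumed anomaly limit
    actuate: Callable[[float], None]     # local corrective action
    window: List[float] = field(default_factory=list)

    def on_reading(self, value: float) -> None:
        # Act immediately on the premises; no cloud round trip on this path.
        if value > self.threshold:
            self.actuate(value)
        self.window.append(value)

    def summary_for_cloud(self) -> Dict[str, float]:
        # Send a compact aggregate upstream instead of every raw sample.
        if not self.window:
            return {"count": 0}
        summary = {"count": len(self.window), "mean": mean(self.window), "max": max(self.window)}
        self.window.clear()
        return summary


if __name__ == "__main__":
    actions: List[float] = []
    node = FogNode(threshold=80.0, actuate=actions.append)
    for reading in [72.0, 75.5, 91.2, 70.1]:
        node.on_reading(reading)
    print(actions)                   # [91.2]
    print(node.summary_for_cloud())  # count, mean and max of the four readings
```

The split mirrors the text: the time-critical decision happens on the node, while the cloud receives periodic aggregates for non-real-time uses.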

Standardization

As the number of fog computing applications and vendors grows, data and interface compatibility becomes an issue. A lack of interoperability between products in the industry would hinder adoption of the technology.

The OpenFog Consortium was founded in 2015 and is backed by companies such as Cisco, ARM, Dell and Microsoft. It is driving standards and best practices for fog computing system design (Figure Two), with the goal of facilitating the adoption of cross-industry standards and frameworks.

Growing Fog Computing Use Cases

Mission Critical Applications

In addition to IIoT, consumer-facing IoT applications are also becoming more sophisticated and mission-critical. In the first wave of consumer IoT, the industry and consumers explored novel and often hyped use cases. These tended to be less demanding (e.g., changing the color of light bulbs).

However, as the IoT market matures, IoT will become the backbone of the infrastructure that supports important activities in people’s daily lives. The status quo will not be enough: reliability and real-time response will be essential.

The Automated Driving System (ADS) is one example. ADS employs multiple advanced technologies, including multi-modal sensors, computer vision, artificial intelligence and machine learning. The system performs data fusion, image analysis, mapping and prediction to determine the best action and the corresponding drivetrain controls.

All of this must happen reliably, within milliseconds and without interruption. The data bandwidth and latency requirements mandate a powerful processing node in the car, with built-in redundancy.
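One way to reason about "milliseconds" is a per-frame budget: if the sensors deliver 30 frames per second, the processing for each frame must finish in about 33 ms before the next one arrives. The sketch below assumes the stages run one after another for each frame; the stage names and timings are hypothetical placeholders, not measurements of any real ADS.

```python
from typing import Dict


def frame_budget_ms(fps: float) -> float:
    """Time available per frame before the next one arrives."""
    return 1000.0 / fps


def slack_ms(stage_ms: Dict[str, float], fps: float) -> float:
    """Remaining budget, assuming the stages run sequentially for each frame."""
    return frame_budget_ms(fps) - sum(stage_ms.values())


if __name__ == "__main__":
    # Hypothetical stage timings, not measurements of any real system.
    on_board = {"sensor fusion": 8.0, "image analysis": 15.0, "planning": 6.0}
    print(f"on-board slack: {slack_ms(on_board, 30):.1f} ms")    # positive: fits

    via_cloud = dict(on_board, cloud_round_trip=100.0)
    print(f"via cloud slack: {slack_ms(via_cloud, 30):.1f} ms")  # negative: misses
```

Under these assumptions, adding a single cloud round trip to the per-frame path overruns the budget several times over, which is why the processing node has to sit in the car.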

Intelligent IoT Applications

Besides ADS, artificial intelligence (AI) and computer vision applications will also increase demand for fog computing. An intelligent IoT system does not just collect and analyze data for human consumption. It needs to respond to situations without human intervention.

To achieve that, it performs real-time AI inference using data from a large number of sensors. It then sends commands to actuators in machines, drones or robots to carry out actions. When operating without human supervision, the AI engine also collects the real-time results of those actions to decide what to do next.

We need a hybrid fog/cloud model, in which edge processing nodes handle time-sensitive computer vision and AI inference tasks, while cloud nodes handle non-real-time or soft real-time functions such as software updates, contextual information collection and long-term big data analysis.
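A simple placement rule captures this hybrid split: a task whose deadline is shorter than a plausible cloud round trip stays on the edge node, and everything else can go to the cloud. The 150 ms round-trip figure, the `Task` type and the `place` helper are hypothetical; a production scheduler would also weigh bandwidth, privacy and latency variance rather than a single assumed average.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed typical cloud round trip; real values vary widely by network.
CLOUD_ROUND_TRIP_MS = 150.0


@dataclass
class Task:
    name: str
    deadline_ms: Optional[float]  # None = no hard real-time deadline


def place(task: Task) -> str:
    """Hypothetical rule: run on the edge if the cloud round trip alone would exceed the deadline."""
    if task.deadline_ms is not None and task.deadline_ms < CLOUD_ROUND_TRIP_MS:
        return "edge"
    return "cloud"


if __name__ == "__main__":
    tasks = [
        Task("vision inference", 30.0),
        Task("actuator command", 10.0),
        Task("software update", None),
        Task("long-term analytics", None),
    ]
    for t in tasks:
        print(f"{t.name}: {place(t)}")
```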

GPU – The Dominant Machine Learning Platform

State-of-the-art AI systems use technologies such as deep neural networks (DNNs). Most of the best-performing DNNs have deep network structures (many layers of nonlinear processing units) to achieve higher accuracy.
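The phrase "many layers of nonlinear processing units" can be made concrete with a minimal forward pass: each layer computes a matrix-vector product plus a bias, followed by a nonlinearity (ReLU in this sketch). This is a pure-Python teaching example with made-up weights; real DNN frameworks use optimized tensor libraries and far larger layers.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def relu(v: Vector) -> Vector:
    # Nonlinearity: negative activations become zero.
    return [max(0.0, x) for x in v]


def dense(w: Matrix, b: Vector, x: Vector) -> Vector:
    # y = W x + b; each output is an independent dot product.
    return [sum(wij * xj for wij, xj in zip(row, x)) + bi for row, bi in zip(w, b)]


def forward(layers: List[Tuple[Matrix, Vector]], x: Vector) -> Vector:
    """Apply each (W, b) layer, with ReLU between layers but not after the last."""
    for i, (w, b) in enumerate(layers):
        x = dense(w, b, x)
        if i < len(layers) - 1:
            x = relu(x)
    return x


if __name__ == "__main__":
    # Made-up weights for a 2-input, 2-hidden-unit, 1-output network.
    layers = [
        ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
        ([[1.0, 1.0]], [0.0]),
    ]
    print(forward(layers, [2.0, 1.0]))  # [2.5]
    print(forward(layers, [0.0, 3.0]))  # [1.5]
```

Stacking more such layers, with wider matrices, is what drives the data movement and compute demands discussed next.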

However, these implementations usually demand a high volume of data movement and a large number of compute units. Machine learning and AI researchers are turning to Graphics Processing Units (GPUs) to handle these workloads. GPUs have long been, and still are, commonplace in high-performance gaming systems.

Since 2007, Nvidia has developed its Compute Unified Device Architecture (CUDA) technology to exploit the power of its graphics chips for compute problems beyond 3D shader processing. GPUs are designed for high data throughput and have a large number of processing cores, which makes them well suited to compute-intensive problems such as linear algebra, signal processing and machine learning.
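The fit between GPUs and linear algebra shows up in a quick operation count for dense matrix multiplication, a core operation in many DNN layers. The helpers below are illustrative: they only count work and do not run anything on a GPU. They use the conventional count of one multiply and one add per term.

```python
def matmul_flops(m: int, k: int, n: int) -> int:
    """Conventional FLOP count for C = A @ B with A (m x k) and B (k x n):
    one multiply and one add for each of the m * n * k terms."""
    return 2 * m * k * n


def independent_outputs(m: int, n: int) -> int:
    """Each element of C is its own dot product, so all m * n can be
    computed in parallel, e.g. spread across many GPU threads."""
    return m * n


if __name__ == "__main__":
    m = k = n = 4096
    print(f"{matmul_flops(m, k, n):,} FLOPs")                    # 137,438,953,472
    print(f"{independent_outputs(m, n):,} independent outputs")  # 16,777,216
```

Over 137 billion operations spread across more than 16 million independent outputs is exactly the kind of high-throughput, highly parallel workload that many GPU cores handle well.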

The CUDA programming API allows research scientists in many domains, including AI and machine learning, to program GPUs and leverage their power more easily. The availability and continuous improvement of GPU systems in the consumer market make it possible for AI researchers to train and validate designs within a reasonable time and budget. Today, Nvidia’s CUDA platform largely dominates the machine learning and AI market.

Embedded AI on the Edge

For many embedded or mobile systems, typical GPUs are far too expensive and power-hungry. In the last few years, companies such as Nvidia, Intel, ARM and Apple have put a lot of effort into embedded AI system design. Nvidia’s powerful Tegra processor with CUDA technology is currently the market leader. The Nvidia Jetson platform (Figure 2) is widely used in smart drones and ADS vehicles.

Intel is also actively investing in similar embedded AI technologies, for example through its recent acquisition of the computer vision chip company Movidius. Qualcomm, MediaTek, Huawei, AMD and several startups are also eyeing this rapidly growing market and are building neural network capabilities into their future systems on chip (SoCs).

These technologies will find their way into the market over the next few years. Chip vendors are also working closely with software developers to optimize implementations on their processors.

Embedded software developers are also looking to optimize neural network architectures that strike the right balance between complexity and accuracy. These requirements usually differ greatly across applications and use cases.
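A back-of-the-envelope cost model shows why these architecture choices matter. For a standard convolution layer, the multiply-accumulate (MAC) count scales with output resolution, input and output channel counts, and kernel size, so halving the resolution, or halving both channel counts, cuts the work roughly fourfold, usually at some cost in accuracy. The layer dimensions below are illustrative and not taken from a specific network.

```python
def conv2d_macs(h_out: int, w_out: int, c_in: int, c_out: int, k: int) -> int:
    """Multiply-accumulates for a standard k x k convolution (bias ignored):
    every output value needs c_in * k * k MACs."""
    return h_out * w_out * c_out * c_in * k * k


if __name__ == "__main__":
    # Illustrative layer sizes, not taken from a specific network.
    base = conv2d_macs(112, 112, 64, 128, 3)
    half_res = conv2d_macs(56, 56, 64, 128, 3)
    half_width = conv2d_macs(112, 112, 32, 64, 3)
    print(f"baseline:           {base:,} MACs")        # 924,844,032
    print(f"half resolution:    {half_res:,} MACs")    # 4x fewer
    print(f"half channel width: {half_width:,} MACs")  # 4x fewer
```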

One example is face recognition, where the out-of-the-box accuracy and real-time requirements of an access control system are very different from those of a photo-tagging application. This can translate into orders-of-magnitude differences in processing requirements and, ultimately, system cost.
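A rough throughput calculation shows how the real-time requirement alone can shift processing needs by orders of magnitude. All numbers are hypothetical: a face model costing 2 GMAC per image, an access-control gate with 4 cameras at 30 frames per second processed in real time, and a photo-tagging service that processes 1,000 photos per day in batch.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def required_gmac_per_s(macs_per_image: float, images_per_second: float) -> float:
    """Sustained compute needed, in billions of MACs per second."""
    return macs_per_image * images_per_second / 1e9


if __name__ == "__main__":
    macs_per_image = 2e9  # hypothetical face model cost

    # Access control: 4 cameras at 30 fps, processed in real time.
    access = required_gmac_per_s(macs_per_image, 4 * 30)

    # Photo tagging: 1,000 photos per day, processed in batch.
    tagging = required_gmac_per_s(macs_per_image, 1000 / SECONDS_PER_DAY)

    print(f"access control: {access:.1f} GMAC/s")    # 240.0
    print(f"photo tagging:  {tagging:.4f} GMAC/s")
    print(f"ratio: about {access / tagging:,.0f}x")  # about 10,368x
```

Even with the same model, the real-time gate needs roughly four orders of magnitude more sustained compute in this sketch. Differing accuracy targets, and therefore different model sizes, would move the ratio further.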

Conclusions

For the next generation of intelligent IoT systems, the trend will be toward fog/cloud hybrid architectures. The major cloud service providers, for example, are starting to develop fog computing and integrate it into their cloud offerings (The Big Three Make a Play for the Fog). Together with standards such as those defined by the OpenFog Consortium, these ecosystems will help IoT fog solutions thrive. For designers of intelligent fog computing nodes and endpoints, the choice of embedded processing platforms will keep growing over the next few years.

Going forward, engineers will need to employ domain-specific algorithms and neural network designs to meet usage requirements while delivering products on budget and with a short time to market. We will explore some of these application domains in future posts.

This article was written by Frank Lee. He is the co-founder and CEO of DeepPhoton Inc, which is developing a platform that empowers IoT devices with cognitive technology. He has over 20 years of experience leading product development in IoT platforms, media processing, computer vision and cloud software. Frank is an enthusiast of computer vision, IoT and machine intelligence.

Thanks for reading this post. If you found it useful, please share it with your network. Subscribe to our newsletter to be notified when we publish new blog articles. You can also follow us on Twitter (@strategythings), LinkedIn or Facebook.
